DVI vs VGA – What’s the Difference?

Digital data transfer helped revolutionize how we view content in the world of display technology. Connectors and interfaces have changed over the years, and one of the most notable shifts was the move from VGA to DVI.

DVI almost entirely moved to a digital signal, making it a milestone in display technology, both on the sending and receiving side of the signal. Here is everything that you should know about the two interfaces and connectors.

DVI vs VGA – The Basics

The first thing to understand about the two technologies is that VGA sends only analog signals, while DVI can send analog signals, digital signals, or both, depending on the connector. DVI was made with the idea of moving to a digital signal while keeping legacy support.

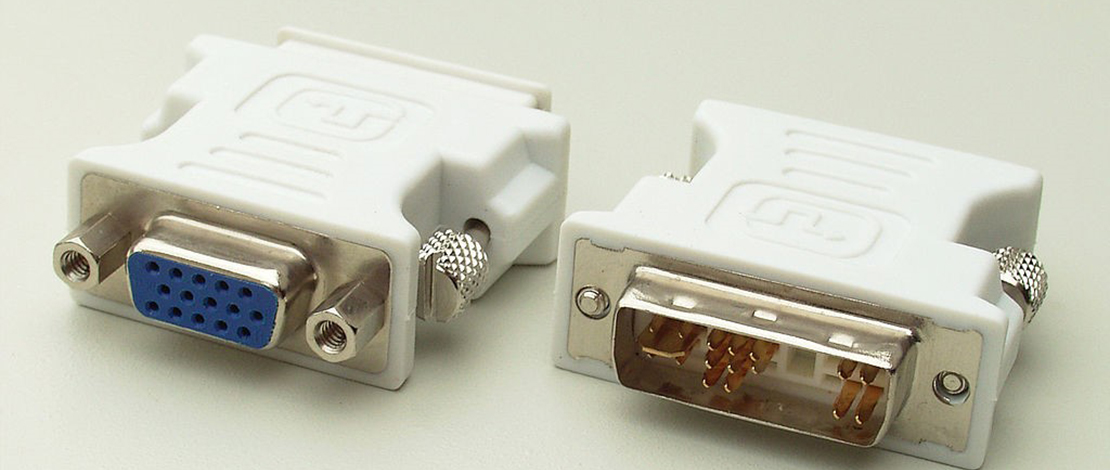

In the early 2000s, most monitors still had a VGA port, so DVI was designed in a way that made reusing existing gear easier. In some cases, a passive adapter could do the job instead of an active one. Where it was supported, DVI was an upgrade, which made it the go-to standard once the market was full of compatible devices.

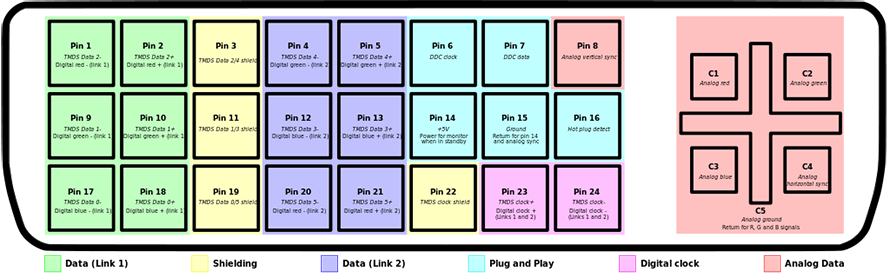

DVI has a different pinout. Not all DVI connectors share this layout; this one is dual-link DVI-I.

VGA vs DVI Resolution – Digital Wins, Right?

Resolution was and still is an important factor in choosing which interface to use, as well as which devices to pair. Resolution seems to be the limitation for VGA, right? Well, not really. Technically, the VGA connector can relay almost any resolution; the resulting quality, however, depends on the cable quality and length.

Given that it’s an analog signal, the cable plays a vital role in regard to signal and image quality. The sender has to boost the signal sufficiently for it to make the distance to the receiver. The higher the resolution, the better the cable should be.

DVI has limitations too, such as cable length. Although it doesn’t send an analog signal, the TMDS signal still degrades over longer cables until the receiver can no longer recover the data reliably. That means signal repeaters are necessary for long cables.

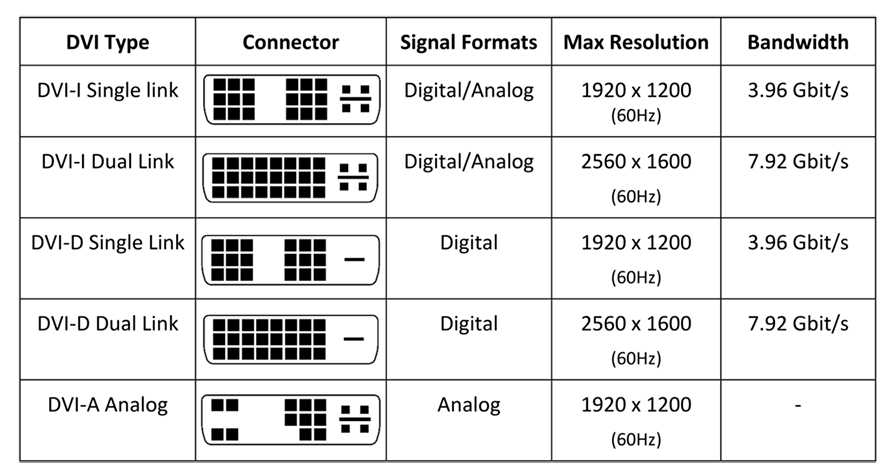

Dual-link DVI tops out at around 2560 x 1600 at 60 Hz, and single-link at roughly 1920 x 1200. DVI simplified things, but in terms of resolution, VGA could win in theory, though you would need special displays and graphics cards, not to mention cables.

DVI isn’t that complicated, though it realistically didn’t need three variations.
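The resolution ceiling comes down to pixel clock. As a rough sketch, the Python below multiplies the total pixels per frame (including blanking) by the refresh rate and compares the result with the 165 MHz single-link TMDS limit. The function names are made up for illustration, the timing totals are commonly published CEA and CVT reduced-blanking figures, and treating dual-link as simply twice 165 MHz is a simplifying assumption.

```python
SINGLE_LINK_MAX_MHZ = 165.0  # single-link DVI TMDS pixel clock limit
# Assumption: dual-link is modeled as two links, each at the single-link limit.
DUAL_LINK_MAX_MHZ = 2 * SINGLE_LINK_MAX_MHZ


def pixel_clock_mhz(h_total, v_total, refresh_hz):
    """Pixel clock in MHz for a mode; the totals include blanking intervals."""
    return h_total * v_total * refresh_hz / 1_000_000


def dvi_link_needed(clock_mhz):
    """Classify a pixel clock against the assumed DVI link limits."""
    if clock_mhz <= SINGLE_LINK_MAX_MHZ:
        return "single-link"
    if clock_mhz <= DUAL_LINK_MAX_MHZ:
        return "dual-link"
    return "beyond DVI"


# (h_total, v_total, refresh_hz) for a few illustrative modes
modes = {
    "1920x1080": (2200, 1125, 60),
    "1920x1200": (2080, 1235, 60),
    "2560x1600": (2720, 1646, 60),
}

for name, timing in modes.items():
    clock = pixel_clock_mhz(*timing)
    print(f"{name}: {clock:.1f} MHz -> {dvi_link_needed(clock)}")
```

With these totals, 1920 x 1080 at 60 Hz works out to 148.5 MHz and fits a single link, while 2560 x 1600 at 60 Hz lands well above 165 MHz and needs dual-link.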

DVI vs VGA Quality – Image Quality is Important

Old cathode ray tube monitors were power-hungry and literally fired electrons at the screen in front of you. When LCD monitors replaced them, some aspects of image quality improved, most notably colors and brightness at first.

Those gains came at the cost of speed, latency, and motion clarity. CRT monitors still handle motion blur better than LCD screens; only OLED screens match or beat them.

In this regard, DVI should offer better image quality, but only if paired with a monitor that actually has great image quality. CRT screens typically use VGA connectors. They also have great viewing angles, which cannot be said of many LCD screens.

Between roughly 2010 and 2016, both were made obsolete by modern hardware and replaced by HDMI and DisplayPort.

VGA Quality

VGA quality can suffer because of bad monitors, cables, graphics cards, or any other device that sends the signal. Even a good monitor can show a poor image with artifacts if the cable is too long or of poor quality.

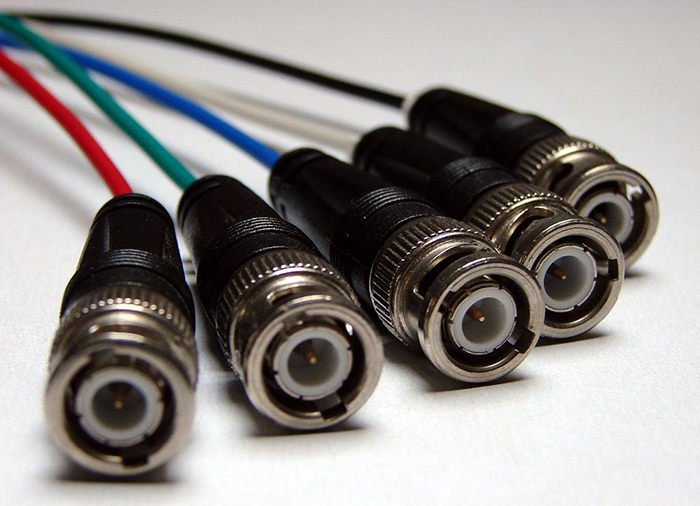

VGA boosters can strengthen the signal and extend the cable range, but channel crosstalk is also a possible issue if the cables are not properly shielded. BNC coaxial cables can be used, though BNC connectors were found only on the highest-quality monitors.

BNC VGA connectors add quality and length to VGA.

DVI Quality

DVI quality could also suffer from cable length, but it is easier to boost and has fewer issues inherently. DVI can also transfer an analog signal, so monitors with a VGA connector would also get a decent signal.

Analog output requires a DVI-I or DVI-A connector, because only those carry the analog signal that a passive adapter can pass through to a VGA port. A DVI-D port has no analog signal to pass along, so a passive adapter cannot work there. Active adapters could solve the issue, but that would be an additional complication. Of course, for the best quality, DisplayPort and HDMI are the solutions.

Apple always did things differently, hence the mini DVI connector, designed by Apple.

DVI Cable vs VGA

As stated, a DVI cable should be better than a VGA cable at transmitting data; however, there are limitations. The longer the cable, the lower the resolution it can carry reliably. 1080p is good up to about 4.5 meters in length.

Going to 15 meters drops the resolution to 1280 x 1024. The problem with larger distances is that the TMDS signal is not stable without boosters, which is why boosters are used for longer runs. They have to be powered, much like VGA boosters, though the technology is different.

VGA deals with other issues, such as signal degradation over a longer cable due to attenuation and impedance mismatches. You could boost the signal, but the reality is that longer distances with VGA cables are troublesome and that the cables can make or break the image quality.

A quality VGA cable has to be properly shielded to prevent both crosstalk and outside interference. Thus, for higher resolutions, the cables should be as short as possible while also being built to a high standard.

DVI Port vs VGA

The DVI connector has three variants: DVI-D, which is digital only; DVI-I, which carries both digital and analog signals; and DVI-A, which is analog only. VGA, on the other hand, most commonly uses the 15-pin D-subminiature connector.

DVI-I and DVI-D also come in dual-link variants, which have more pins and add a second TMDS link to carry more digital data. This translates to a higher resolution or refresh rate, depending on the preference. VGA comes in its standard form, plus the mini VGA variant used on Apple computers of the time.
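The connector variants above can be summarized as a small lookup. This sketch, with hypothetical names such as `DviVariant` and `vga_adapter_type`, records which signal types each connector carries and derives whether a passive DVI-to-VGA adapter is enough. It assumes a passive adapter only rewires pins and therefore needs an analog signal already present at the port.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DviVariant:
    """Signal types carried by one DVI connector variant (illustrative model)."""
    name: str
    digital: bool
    analog: bool


VARIANTS = {
    "DVI-D": DviVariant("DVI-D", digital=True, analog=False),
    "DVI-I": DviVariant("DVI-I", digital=True, analog=True),
    "DVI-A": DviVariant("DVI-A", digital=False, analog=True),
}


def vga_adapter_type(variant_name):
    """Return which kind of DVI-to-VGA adapter the given port needs."""
    variant = VARIANTS[variant_name]
    # A passive adapter only rewires pins, so the port must already output analog.
    return "passive" if variant.analog else "active"


for name in VARIANTS:
    print(f"{name}: {vga_adapter_type(name)} adapter for VGA")
```

Only DVI-D ends up needing an active adapter here, since it is the one variant without an analog signal.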

A quality VGA cable and connector.

Conclusion

DVI and VGA both have their limitations, though the digital side of DVI has its benefits. However, most older CRT monitors run VGA, so if you are interested in those, you will likely need appropriate adapters or cards that have VGA out.

Both DVI and VGA are obsolete and have been surpassed by HDMI and DisplayPort. For most modern users, choosing HDMI or DisplayPort, when properly supported, is a much better solution.